MiniMax M2.7 Is Live on BlockRun — The First Self-Evolving Reasoning Model

MiniMax just dropped M2.7 — and it's live on BlockRun right now.

One API call. Pay per request. No subscription. No API key signup with MiniMax.

    curl https://blockrun.ai/v1/chat/completions \
      -H "Content-Type: application/json" \
      -d '{
        "model": "minimax/minimax-m2.7",
        "messages": [{"role": "user", "content": "Hello"}]
      }'

If you're still calling minimax/minimax-m2.5, it auto-redirects to M2.7. No code changes needed.
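The curl call above can be mirrored in Python with only the standard library. This is a minimal sketch: it builds the request object but does not send it, and it does not cover whatever payment or authorization step the pay-per-request flow requires, since this post does not describe it. `build_chat_request` is a hypothetical helper name, not part of any BlockRun SDK.

```python
import json
import urllib.request

# Endpoint taken from the curl example above.
BLOCKRUN_CHAT_URL = "https://blockrun.ai/v1/chat/completions"


def build_chat_request(prompt: str, model: str = "minimax/minimax-m2.7") -> urllib.request.Request:
    """Build (but do not send) a POST request equivalent to the curl example."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        BLOCKRUN_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("Hello")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Keeping construction separate from sending makes it easy to inspect the exact body before any paid request goes out.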

What Makes M2.7 Different

M2.7 is the first model MiniMax describes as deeply participating in its own evolution. It doesn't just run agent tasks — it builds and optimizes its own agent harnesses through recursive self-improvement loops.

In practice, that means:

- 97% skill adherence across 40+ complex skills (each exceeding 2,000 tokens)

- 30% performance gains from recursive harness optimization over 100+ iteration cycles

- Handles 30–50% of research workflows autonomously

This isn't a chatbot upgrade. It's a model that gets better at being an agent the more you use it as one.

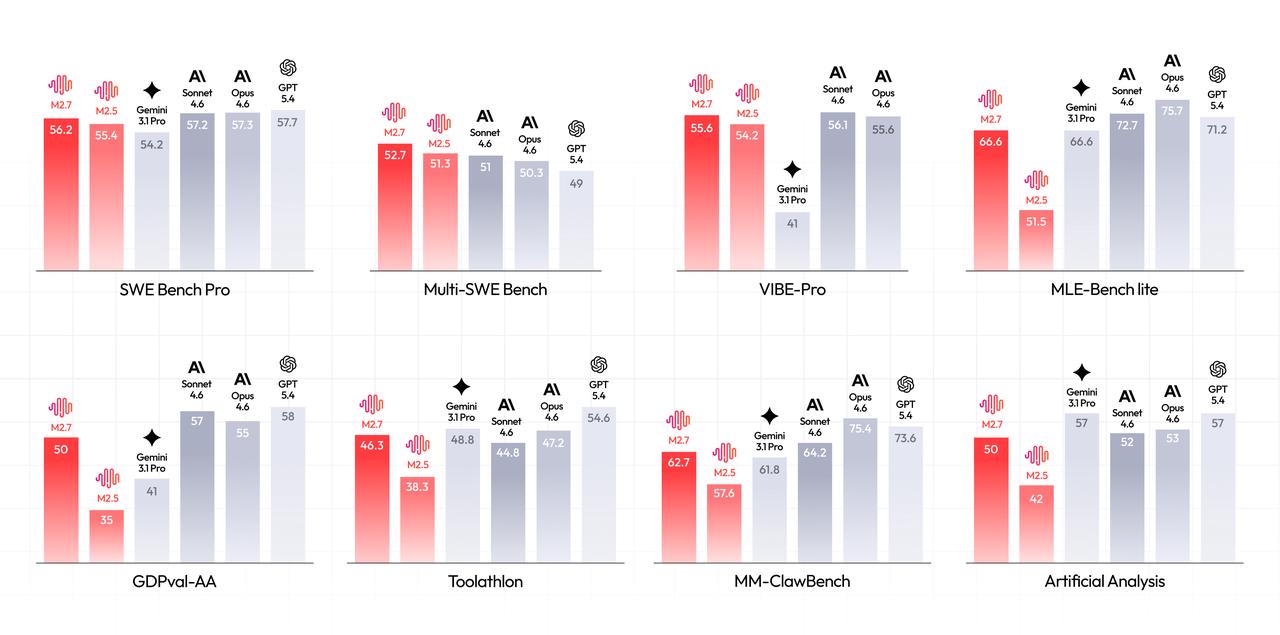

Benchmarks That Matter

Software Engineering

| Benchmark | M2.7 | Context |
|---|---|---|
| SWE-Pro | 56.22% | Matches GPT-5.3-Codex |
| VIBE-Pro | 55.6% | End-to-end project delivery |
| Terminal Bench 2 | 57.0% | Complex engineering systems |
| SWE Multilingual | 76.5 | Cross-language code tasks |
| Multi SWE Bench | 52.7 | Multi-repo engineering |

Machine Learning & Research

MLE Bench Lite (22 Kaggle-style competitions): 66.6% average medal rate, behind only Opus 4.6 (75.7%) and GPT-5.4 (71.2%). Best single run: 9 gold, 5 silver, 1 bronze.
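To put the best single run next to the 66.6% average, the arithmetic can be sketched as follows. It assumes "medal rate" means medals earned divided by the number of competitions; the post does not define the term, so treat that as an assumption.

```python
# Medal counts quoted for the best single run on MLE Bench Lite.
best_run = {"gold": 9, "silver": 5, "bronze": 1}
competitions = 22  # number of competitions in the benchmark

medals = sum(best_run.values())
# Assumed definition: medal rate = medals earned / competitions entered.
best_run_rate = medals / competitions

print(f"best run: {medals}/{competitions} = {best_run_rate:.1%}")
# prints "best run: 15/22 = 68.2%"
```

Under that assumption, the best run sits only slightly above the reported average.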

Professional Productivity

| Benchmark | M2.7 | Context |
|---|---|---|
| GDPval-AA ELO | 1495 | Highest among open-source models |
| Toolathon | 46.3% | Tool use accuracy |
| MM Claw | 62.7% | Near Sonnet 4.6 level |

Production debugging benchmarks show incident recovery time under 3 minutes — SRE-level decision-making for log analysis, security audits, and system comprehension.

New in M2.7 vs M2.5

- Native Agent Teams — multi-agent collaboration built into the model, not bolted on

- Recursive self-improvement — the model optimizes its own harnesses over iteration cycles

- Character consistency — dramatically improved emotional intelligence for interactive apps

- Financial analysis — deep reasoning over complex financial documents and reports

Pricing on BlockRun

| Item | Value |
|---|---|
| Input | $0.30 / 1M tokens |
| Output | $1.20 / 1M tokens |
| Context window | 204,800 tokens |

On output tokens, that is 50× cheaper than Claude Opus and 12× cheaper than GPT-5.4, while M2.7 stays competitive on the engineering benchmarks above (for example, matching GPT-5.3-Codex on SWE-Pro).

Pay per request with USDC on Base. No API key. No subscription. No minimum spend.
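For budgeting, the listed prices translate into a per-request estimate like the sketch below. The function names are hypothetical, and the context check assumes input and output tokens share the 204,800-token window, which the post does not state explicitly.

```python
INPUT_USD_PER_M = 0.30    # input price per 1M tokens, from the pricing table
OUTPUT_USD_PER_M = 1.20   # output price per 1M tokens, from the pricing table
CONTEXT_WINDOW = 204_800  # tokens, from the pricing table


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request at the listed per-token prices."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


def fits_context(input_tokens: int, output_tokens: int) -> bool:
    """Assumption: prompt and completion tokens count against the same window."""
    return input_tokens + output_tokens <= CONTEXT_WINDOW


cost = estimate_cost_usd(input_tokens=100_000, output_tokens=4_000)
print(f"${cost:.4f}")  # prints "$0.0348"
print(fits_context(100_000, 4_000))  # prints "True"
```

Token counts have to come from your own tokenizer or from usage data returned with a response; the sketch only does the multiplication.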

Try It Now

Direct API:

https://blockrun.ai/v1/chat/completions

Python SDK:

    from blockrun import BlockRun

    client = BlockRun()
    response = client.chat("minimax/minimax-m2.7", "Explain this codebase")

TypeScript SDK:

    import { BlockRun } from "blockrun";

    const client = new BlockRun();
    const response = await client.chat("minimax/minimax-m2.7", "Explain this codebase");

ClawRouter (drop-in OpenAI replacement for any framework):

    export OPENAI_BASE_URL=https://blockrun.ai/v1
    # Works with OpenClaw, LangChain, CrewAI, AutoGen, or any OpenAI-compatible client
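As a rough illustration of what an OpenAI-compatible client does with that setting, the sketch below resolves the chat endpoint from `OPENAI_BASE_URL` and pulls the reply text out of a response. Both helper names are hypothetical, and the response shape is an assumption based on the standard OpenAI chat completions format; the sample response is made up for illustration, not real BlockRun output.

```python
import os
from typing import Mapping

DEFAULT_BASE_URL = "https://blockrun.ai/v1"  # same value as the export above


def chat_completions_url(env: Mapping[str, str] = os.environ) -> str:
    """Resolve the chat endpoint from OPENAI_BASE_URL, falling back to the default."""
    base = env.get("OPENAI_BASE_URL", DEFAULT_BASE_URL).rstrip("/")
    return f"{base}/chat/completions"


def first_reply_text(response: dict) -> str:
    """Assumes an OpenAI-style body: choices[0].message.content."""
    return response["choices"][0]["message"]["content"]


# Hypothetical response, shaped like an OpenAI chat completion.
sample_response = {
    "choices": [{"message": {"role": "assistant", "content": "Hi there"}}]
}

print(chat_completions_url({"OPENAI_BASE_URL": "https://blockrun.ai/v1"}))
print(first_reply_text(sample_response))
```

Frameworks that honor `OPENAI_BASE_URL` do this resolution internally, so in most cases setting the variable is the only change needed.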

Read the full announcement from MiniMax: MiniMax M2.7 — Beginning the Journey of Recursive Self-Improvement